This article focuses on the development of a community of assessment practice which aimed to ensure that all assessors were consistent with their assessment decisions. The community sought to enable students and assessors to be active participants in a feedback loop, and this research investigated the role that the online feedback tool Grademark could play in creating that loop. The study focuses on one of three BA (Hons) Film Production units at UCA Farnham where three staff assessed over 500 individual pieces of work during the 2017-2018 academic year. Concentrating on a Year 1 unit with two summative assessment points, the article discusses how Grademark was used to foster a more collaborative and participatory approach to providing, receiving and actioning summative essay feedback.

Published on 18th October 2018 | Written by Carol Walker | Photo by Jakob Owens on Unsplash

‘A grade can be regarded only as an inadequate report of an inaccurate judgement by a biased and variable judge of the extent to which a student has attained an undefined level of mastery of an unknown proportion of an indefinite amount of material.’ (Dressel, 1957:6, cited in Rust, 2017)

Introduction

The research which forms the focus for this article looked at the role that Grademark and one-to-one tutorials can play in changing teaching staff’s perceptions of assessment, and more importantly summative feedback, on three BA (Hons) Film Production units. A review of the course in 2016-17 introduced decreased and streamlined unit aims, learning outcomes and assessment criteria. This meant that less, but better, summative assessment could be provided that should explicitly link to learning outcomes – especially BA (Hons) Film Production course learning outcomes as well as specific unit ones – and the ‘assessment of integrated learning (what the PASS project called programme focussed assessment)’ (Rust, 2017).

Rust (2017) was also useful in thinking about ‘creating a community of assessment practice’, about which he quotes this: ‘consistent assessment decisions among assessors are the product of interactions over time, the internalisation of exemplars, and of inclusive networks. Written instructions, mark schemes and criteria, even when used with scrupulous care, cannot substitute for these’ (HEQC, 1997). This seems to add another dimension to the emphasis of the QAA and UCA quality assurance department which is on standardised mark schemes and assessment criteria, written instructions and written feedback. I wanted to explore how the use of Grademark could help bridge these dimensions, and at the same time help to provide some parity of experience for assessors and increased accessibility and transparency of process for students. It became clear that assessors and students needed to be active participants in the feedback cycle, for ‘participation as a way of learning enables the student to both absorb, and be absorbed, in the culture of practice’ (Elwood & Klenowski, 2002: 246). Using Grademark with one-to-one tutorials provided an ideal way of achieving this.

Attitudes Towards Feedback on the Film Production Course

Students are very critical of feedback practices in Higher Education (HE), particularly in Art and Design settings, and tend not to engage with feedback (Price et al, 2010; O’Donovan et al, 2015). Studies show that the passive receipt of feedback has little effect on future performance (Hounsell, 1987; Fritz, 2000; MacLellan, 2001) and others show that dialogue and participatory approaches are key to engaging students with their feedback – feedback that takes assessors a long time to write. I needed to find a way to lead the team into a new culture of practice around the feedback process. As we are a team of theoreticians, I felt that contextualising change using theoretical evidence could be a productive approach.

In our units, too often feedback for subsequent tasks would state that ‘you do not seem to have read your previous feedback’, and the feeling was that students were not going to read this feedback either. Our notion of feedback seems to rest on assumptions and definitions that feedback is perceived as ‘a key indicator of the effectiveness of the whole course of study’ (Boud and Molloy 2013:707). I began to look for better definitions of feedback that describe it as a process or activity so that I could use theory and definitions to help change the mindset of unit tutors assessing and providing work on the units I lead. This one was particularly useful from Boud and Molloy (2013:702): ‘if the term feedback is used, rather than simply information, there needs to be a way of detecting that there has been an effect in the direction desired. The cycle of feedback needs to be completed. If there is no discernible effect, then feedback has not occurred (…) feedback involves information used, rather than information transmitted.’ I wondered if Grademark could help me here.

I found more academic evidence to support my case (HEA, 2012) that I hoped colleagues would appreciate and most likely respond to. It pointed me towards ideas about feedback being essential to the student experience that helps learning (Parkin et al 2012; West and Turner 2015), encourages teacher-student interaction, and learner engagement (Nicol 2010) and that good timely feedback is a benchmark of a high-quality student experience (Lizzio and Wilson, 2008). NSS results suggest feedback is an area where UCA does not perform strongly enough and many universities have implemented strategies to improve feedback which often involve technology facilitated learning (HEA, 2012).

So, I used this arsenal of theory and evidence to help me persuade the other tutors that using Grademark for assessment and feedback would be a good thing in a wider sense. Then in a more local context, and to start a conversation about more sensitive issues about feedback content, I found Li and De Luca (2014:380) very useful in broaching the subject: ‘barriers to effective feedback were found mainly in three aspects: firstly, the modular programmes constrain the tutors to provide timely feedback that could be applied by students to the improvement of the next assignments; secondly, feedback often focused on grammatical errors or negative aspects of students’ work without suggestions on how to make improvement; finally, the language of feedback was general or vague.’

It was also becoming clear to me that students need to actively engage with feedback, and they need to be trained to do so (Sadler, 1989:79; cited in Boud and Molloy, 2013:702) and so did the tutors who were used to returning feedback to students and that being the end of the process. I felt that a feedback cycle and loop needed to be created as described by Boud and Molloy, who argue that ‘the role of learners as constructors of their own understanding needs to be accepted’ (2013:703). The authors note the importance of identifying ‘the shape of an approach to feedback that not only respects students’ agency in their own processes of learning, but can develop the dispositions needed for identifying and using feedback beyond formal educational structures’ (2013:704). So how could the use of Grademark help effect a culture change in student and staff engagement and create a more productive feedback cycle?

Adopting Turnitin / Grademark for Assessment and Feedback

In academic year 2016-17, the Year 1 and 2 units that I was involved with used Grademark for providing students with feedback on their written work. This meant that, for the first time, students were able to view their assessed work, feedback and mark in one place. Since 2015, we’d been assessing on Grademark and giving QuickMark comments on students’ work, but the feedback was provided in the traditional way on a standard assessment feedback form (a Word document). The unit leader had to convert each form to PDF and send them to their Campus Registry administrator, who then uploaded each file individually to a separate area on the University’s Virtual Learning Environment (myUCA) for students to view.

The essays with comments on, the feedback (which contained the mark where appropriate) and the online marks area on the ‘myRecords’ area of myUCA meant that students needed to look in three separate places for information about their assessment performance. I noticed that the essay with its comments and the feedback area on myUCA were the least looked at places, as students were more interested in the mark and many only looked at myRecords. Having their work, feedback and marks in one place on Grademark meant that students and tutors could view work online or download it as a PDF, then save and print all the information about their work in one place. Using myUCA and Grademark means that assessed work is accessible at any time so we can look at previous essays with students very quickly and easily in tutorials. So there were definite practical administrative and pedagogic advantages to using Grademark in this way.

There had been much resistance from staff generally to using Grademark for assessment and feedback. Staff were worried about their eyesight and about the security and stability of myUCA etc. The default QuickMark comments were scoffed at (see Fig.1 on left), and there were concerns about the Rubric being the first step in a move to assessors having to grade each assessment criterion separately to facilitate a computer-generated aggregated unit grade at the end.

In short, a move to assessing and providing feedback on Grademark was change, and change was resisted. I’d had some issues with the mailing of work to sessional staff, and the time that work spent in the post was starting to impact on us being able to meet the 4-week turnaround time for marking.

So, due to a misunderstanding on my part, I decided as unit leader that we’d assess and provide feedback in the one place on Grademark because I thought that we had to. Paper feedback would no longer be provided, and we would stop asking students for printed copies of their essays – the numbers were so large that Campus Registry and I were struggling to keep track. From the research I’d done and my experiments with Grademark, I could see the benefits timewise and quality-wise of switching wholesale to online marking.

I experimented with Grademark’s methods of adding comments to the essays (see Fig.2 below), and in January 2018 asked students in post-feedback debrief tutorials what they preferred. The majority said text as shown in the red ringed area in Fig.2. Because they view their feedback on phones etc., they did not like to click on blue speech bubbles or QuickMark comments to reveal comments – and many said they hadn’t seen them. All read the text comments. Many struggled with the Grademark rubric, many couldn’t find it, and because the student interface is not the same as the tutors’ I was unable to demonstrate effectively – I am working on resolving this with our Learning Technologist and am developing a guide for students and staff. The outcome of discussions with students about the preferred method for adding comments to their work has been shared with the other assessors, and also with tutors assessing other Film Production units using Grademark for the first time. I have been working with them to help them use Grademark efficiently for assessing and effectively feeding back to students.

Consistency and effectiveness of feedback

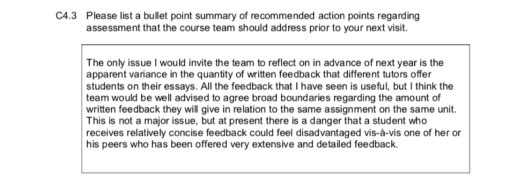

An issue of disparity between assessors had been raised in our External Examiners’ reports since 2014-15 (see excerpts below), and in our Internal Student Survey (ISS) for 2015-16, in terms of the amount of feedback given. Concerns were also raised regarding the helpfulness of feedback as there was still a tendency to overemphasise spelling and grammar. I experimented with developing and sharing banks of assignment-specific QuickMark comments about standard issues directly related to the unit’s assessment criteria, such as referencing, what to include in an introduction etc. to help promote consistency between assessors. However, this has not been successful as some tutors prefer to create their own, use the default ones, or write their own text – and of course, we are assessing essays… and we were still only providing what could be described as ‘…a better monologue (…when…) feedback must be seen as a dialogue or a conversation’ (Nicol, 2010).

However, simply having raised awareness of the issues and entering into this dialogue with staff, the work towards a much more consistent approach is paying off (see Figs. 3-6 below). This is particularly evident in the 2017-18 External Examiner’s report which evidences that change has been effected, which is in part a result of moving to online assessment and feedback:

Our initiative to close the feedback gap by switching to one-to-one feedback tutorials was introduced in 2017-18 for Year 1 students after their first assessment, and it proved to be very successful. Staff had in the past been very reluctant to meet students to discuss feedback, believing that the written narrative and the comments on the work were enough. But because one assessor continued to have to write ‘you have not read your previous feedback’, it seemed obvious that a quick 10-minute high impact tutorial where students showed us they’d accessed the feedback was worth it. During these tutorials, we discussed any questions they had about their feedback, and crucially we talked about the next assessment and how to carry points forward.

The feedback from tutors about this was very positive because they could see that active learning was taking place, and they were appreciative of their role in that process. This has had some impact on the type of feedback that was then produced for the second assessment, as revealed in the end-of-unit evaluation meeting. During this meeting, one tutor remarked on how gratifying it was to see how a student’s work could develop as the result of taking on board feedback about essay 1 that was reinforced during the tutorial.

Conclusions

As Carless et al. (2011) have emphasised, students need to engage in dialogue about monitoring their own work, identify what constitutes appropriate standards of judgement, and plan their own learning if they are to determine what constitutes quality performance and enact it. Dialogue is also needed to help students interpret standards and criteria, and discern how these are manifested in their own work and that of others (Boud and Molloy, 2013:708-9). Tutors on the units I lead are now beginning to engage with this and value both the process and the time investment. This has also positively impacted on overall unit performance and has resulted in some very positive comments in the 2017-18 ISS:

“The film lectures are amazing, they’re interesting, informative and cover a wide range of cinematic topics so there is something for everyone. Feedback for written work is constructive with lots of positive reinforcement.”

“The essay has helped me with my writing skills. Feedback is the most helpful aspect.”

Nicol (2008) states that ‘many researchers maintain that dialogue is an essential component of effective feedback in higher education (…) yet mass higher education has reduced opportunities for individualised teacher-student dialogue.’ I think that the use of Grademark for assessment and feedback, coupled with follow-up tutorials, has created an essential space for this dialogue, and going forward I will be looking for opportunities to provide more of this valuable activity.

Carol Walker is Senior Lecturer in Film Production at the University for the Creative Arts in Farnham and unit leader for Screen Studies 1, Screen Studies 2 and Extended Research Project. Much of this article was originally written as part of a successful Senior Fellowship of the Higher Education Academy application.

References

Boud, D. and Molloy, E. (2013) Rethinking Models of Feedback for Learning: The Challenge of Design. Assessment & Evaluation in Higher Education. 38(6), 698-712

Carless, D., Salter, D., Yang, M. and Lam, J. (2011) Developing sustainable feedback practices. Studies in Higher Education. 36(5), 395–407

Elwood, J. and Klenowski, V. (2002) Creating communities of shared practice: the challenges of assessment use in learning and teaching. Assessment and Evaluation in Higher Education. 27(3), 243-256

Fritz, C.O., Morris, P.E., Bjork, R.A., Gelman, R. and Wickens, T.D. (2000) When Further Learning Fails: Stability and Change Following Repeated Presentation of Text. British Journal of Psychology. 91(4), 493-511

Higher Education Academy (2012) A Marked Improvement: Transforming Assessment in Higher Education. York: HEA. [online] At: https://www.heacademy.ac.uk/system/files/a_marked_improvement.pdf (Accessed on 22/07/2018)

Hounsell, D. (1987) Essay Writing and the Quality of Feedback. In: Richardson, J. T. E. & Warren-Piper, M. (eds) Student Learning: Research in Education and Cognitive Psychology UK. Milton Keynes: SRHE / Open University

Li, J. and De Luca, R. (2014) Review of Assessment Feedback. Studies in Higher Education. 39(2), 378–393

Lizzio, A. and Wilson, K. (2008) Feedback on Assessment: Students’ Perception of Quality and Effectiveness. Assessment and Evaluation in Higher Education. 33(3), 263-275

MacLellan, E. (2001) Assessment for Learning: The Differing Perceptions of Tutors and Students. Assessment and Evaluation in Higher Education. 26(4), 307–318

Nicol, D. (2010) From Monologue to Dialogue: Improving Written Feedback Processes in Mass Higher Education. Assessment and Evaluation in Higher Education. 35(5), 501-517

Nicol, D. (2008) Technology-supported Assessment: A review of Research (Unpublished Manuscript) [online] At: https://www.reap.ac.uk/Portals/101/Documents/REAP/Technology_supported_assessment.pdf (Accessed on 22/07/2018)

O’Donovan, B., Rust, C. and Price, M. (2015) A Scholarly Approach to Solving the Feedback Dilemma in Practice. Assessment & Evaluation in Higher Education. 41(6), 938-949

Parkin, H.J., Hepplestone, S., Holden, G., Irwin, B. and Thorpe, L. (2012) A Role for Enhancing Students Engagement with Feedback. Assessment and Evaluation in Higher Education. 37(8), 963-973

Price, M., Rust, C., O’Donovan, B., Handley, K. and Bryant, R. (2012) Assessment Literacy: The Foundation for Improving Student Learning. Oxford: Oxford Centre for Staff and Learning Development

Rust, C. (2017) Re-thinking assessment – a programme leader’s guide [online blog] In: Brookesblogs At: http://ocsld.brookesblogs.net/2017/12/22/re-thinking-assessment-a-programme-leaders-guide/ (Accessed on 21/07/2018)

West, J. and Turner, W. (2015) Enhancing the Assessment Experience: Improving Student Perceptions, Engagement and Understanding Using Online Video Feedback. Innovations in Education and Teaching International. 53(4), 400-410
